1292:). However, in the GPLVM the mapping is from the embedded(latent) space to the data space (like density networks and GTM) whereas in kernel PCA it is in the opposite direction. It was originally proposed for visualization of high dimensional data but has been extended to construct a shared manifold model between two observation spaces. GPLVM and its many variants have been proposed specially for human motion modeling, e.g., back constrained GPLVM, GP dynamic model (GPDM), balanced GPDM (B-GPDM) and topologically constrained GPDM. To capture the coupling effect of the pose and gait manifolds in the gait analysis, a multi-layer joint gait-pose manifolds was proposed.

3199:-nearest neighbors and tries to seek an embedding that preserves relationships in local neighborhoods. It slowly scales variance out of higher dimensions, while simultaneously adjusting points in lower dimensions to preserve those relationships. If the rate of scaling is small, it can find very precise embeddings. It boasts higher empirical accuracy than other algorithms with several problems. It can also be used to refine the results from other manifold learning algorithms. It struggles to unfold some manifolds, however, unless a very slow scaling rate is used. It has no model.

3219:(TCIE) is an algorithm based on approximating geodesic distances after filtering geodesics inconsistent with the Euclidean metric. Aimed at correcting the distortions caused when Isomap is used to map intrinsically non-convex data, TCIE uses weight least-squares MDS in order to obtain a more accurate mapping. The TCIE algorithm first detects possible boundary points in the data, and during computation of the geodesic length marks inconsistent geodesics, to be given a small weight in the weighted

1240:-nearest neighbors of every point. It then seeks to solve the problem of maximizing the distance between all non-neighboring points, constrained such that the distances between neighboring points are preserved. The primary contribution of this algorithm is a technique for casting this problem as a semidefinite programming problem. Unfortunately, semidefinite programming solvers have a high computational cost. Like Locally Linear Embedding, it has no internal model.

1288:(GPLVM) are probabilistic dimensionality reduction methods that use Gaussian Processes (GPs) to find a lower dimensional non-linear embedding of high dimensional data. They are an extension of the Probabilistic formulation of PCA. The model is defined probabilistically and the latent variables are then marginalized and parameters are obtained by maximizing the likelihood. Like kernel PCA they use a kernel function to form a non linear mapping (in the form of a

20:

555:

78:

1257:

learn to encode the vector into a small number of dimensions and then decode it back into the original space. Thus, the first half of the network is a model which maps from high to low-dimensional space, and the second half maps from low to high-dimensional space. Although the idea of autoencoders is quite old, training of deep autoencoders has only recently become possible through the use of

156:

95:(the letter 'A') and recover only the varying information (rotation and scale). The image to the right shows sample images from this dataset (to save space, not all input images are shown), and a plot of the two-dimensional points that results from using a NLDR algorithm (in this case, Manifold Sculpting was used) to reduce the data into just two dimensions.

629:). The graph thus generated can be considered as a discrete approximation of the low-dimensional manifold in the high-dimensional space. Minimization of a cost function based on the graph ensures that points close to each other on the manifold are mapped close to each other in the low-dimensional space, preserving local distances. The eigenfunctions of the

657:(MDS). Classic MDS takes a matrix of pair-wise distances between all points and computes a position for each point. Isomap assumes that the pair-wise distances are only known between neighboring points, and uses the Floyd–Warshall algorithm to compute the pair-wise distances between all other points. This effectively estimates the full matrix of pair-wise

686:

technique to find the low-dimensional embedding of points, such that each point is still described with the same linear combination of its neighbors. LLE tends to handle non-uniform sample densities poorly because there is no fixed unit to prevent the weights from drifting as various regions differ in sample densities. LLE has no internal model.

124:. In particular, if there is an attracting invariant manifold in the phase space, nearby trajectories will converge onto it and stay on it indefinitely, rendering it a candidate for dimensionality reduction of the dynamical system. While such manifolds are not guaranteed to exist in general, the theory of

50:, with the goal of either visualizing the data in the low-dimensional space, or learning the mapping (either from the high-dimensional space to the low-dimensional embedding or vice versa) itself. The techniques described below can be understood as generalizations of linear decomposition methods used for

3207:

RankVisu is designed to preserve rank of neighborhood rather than distance. RankVisu is especially useful on difficult tasks (when the preservation of distance cannot be achieved satisfyingly). Indeed, the rank of neighborhood is less informative than distance (ranks can be deduced from distances but

1199:

of the original weights produced by LLE. The creators of this regularised variant are also the authors of Local

Tangent Space Alignment (LTSA), which is implicit in the MLLE formulation when realising that the global optimisation of the orthogonal projections of each weight vector, in-essence, aligns

2796:

defines a random walk on the data set which means that the kernel captures some local geometry of data set. The Markov chain defines fast and slow directions of propagation through the kernel values. As the walk propagates forward in time, the local geometry information aggregates in the same way as

1256:

which is trained to approximate the identity function. That is, it is trained to map from a vector of values to the same vector. When used for dimensionality reduction purposes, one of the hidden layers in the network is limited to contain only a small number of network units. Thus, the network must

110:, which is a linear dimensionality reduction algorithm, is used to reduce this same dataset into two dimensions, the resulting values are not so well organized. This demonstrates that the high-dimensional vectors (each representing a letter 'A') that sample this manifold vary in a non-linear manner.

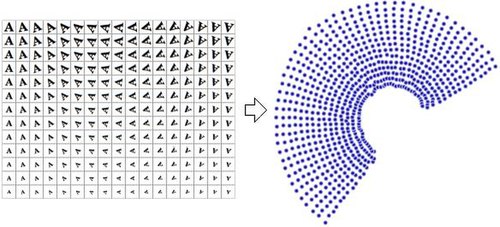

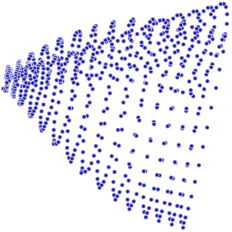

94:

is two, because two variables (rotation and scale) were varied in order to produce the data. Information about the shape or look of a letter 'A' is not part of the intrinsic variables because it is the same in every instance. Nonlinear dimensionality reduction will discard the correlated information

1320:

algorithm. The algorithm finds a configuration of data points on a manifold by simulating a multi-particle dynamic system on a closed manifold, where data points are mapped to particles and distances (or dissimilarity) between data points represent a repulsive force. As the manifold gradually grows

624:

Traditional techniques like principal component analysis do not consider the intrinsic geometry of the data. Laplacian eigenmaps builds a graph from neighborhood information of the data set. Each data point serves as a node on the graph and connectivity between nodes is governed by the proximity of

70:

High dimensional data can be hard for machines to work with, requiring significant time and space for analysis. It also presents a challenge for humans, since it's hard to visualize or understand data in more than three dimensions. Reducing the dimensionality of a data set, while keep its essential

1452:

It should be noticed that CCA, as an iterative learning algorithm, actually starts with focus on large distances (like the Sammon algorithm), then gradually change focus to small distances. The small distance information will overwrite the large distance information, if compromises between the two

637:

on the unit circle manifold). Attempts to place

Laplacian eigenmaps on solid theoretical ground have met with some success, as under certain nonrestrictive assumptions, the graph Laplacian matrix has been shown to converge to the Laplace–Beltrami operator as the number of points goes to infinity.

1483:

learns a smooth diffeomorphic mapping which transports the data onto a lower-dimensional linear subspace. The methods solves for a smooth time indexed vector field such that flows along the field which start at the data points will end at a lower-dimensional linear subspace, thereby attempting to

1495:

takes advantage of the assumption that disparate data sets produced by similar generating processes will share a similar underlying manifold representation. By learning projections from each original space to the shared manifold, correspondences are recovered and knowledge from one domain can be

601:

in his 1984 thesis, which he formally introduced in 1989. This idea has been explored further by many authors. How to define the "simplicity" of the manifold is problem-dependent, however, it is commonly measured by the intrinsic dimensionality and/or the smoothness of the manifold. Usually, the

685:

algorithms, and better results with many problems. LLE also begins by finding a set of the nearest neighbors of each point. It then computes a set of weights for each point that best describes the point as a linear combination of its neighbors. Finally, it uses an eigenvector-based optimization

113:

It should be apparent, therefore, that NLDR has several applications in the field of computer-vision. For example, consider a robot that uses a camera to navigate in a closed static environment. The images obtained by that camera can be considered to be samples on a manifold in high-dimensional

85:

The reduced-dimensional representations of data are often referred to as "intrinsic variables". This description implies that these are the values from which the data was produced. For example, consider a dataset that contains images of a letter 'A', which has been scaled and rotated by varying

3165:

to train a multi-layer perceptron (MLP) to fit to a manifold. Unlike typical MLP training, which only updates the weights, NLPCA updates both the weights and the inputs. That is, both the weights and inputs are treated as latent values. After training, the latent inputs are a low-dimensional

538:

must be chosen such that it has a known corresponding kernel. Unfortunately, it is not trivial to find a good kernel for a given problem, so KPCA does not yield good results with some problems when using standard kernels. For example, it is known to perform poorly with these kernels on the

2025:

596:

give the natural geometric framework for nonlinear dimensionality reduction and extend the geometric interpretation of PCA by explicitly constructing an embedded manifold, and by encoding using standard geometric projection onto the manifold. This approach was originally proposed by

616:

Laplacian eigenmaps uses spectral techniques to perform dimensionality reduction. This technique relies on the basic assumption that the data lies in a low-dimensional manifold in a high-dimensional space. This algorithm cannot embed out-of-sample points, but techniques based on

1303:(t-SNE) is widely used. It is one of a family of stochastic neighbor embedding methods. The algorithm computes the probability that pairs of datapoints in the high-dimensional space are related, and then chooses low-dimensional embeddings which produce a similar distribution.

602:

principal manifold is defined as a solution to an optimization problem. The objective function includes a quality of data approximation and some penalty terms for the bending of the manifold. The popular initial approximations are generated by linear PCA and

Kohonen's SOM.

474:

1129:

838:

1185:

is also based on sparse matrix techniques. It tends to yield results of a much higher quality than LLE. Unfortunately, it has a very costly computational complexity, so it is not well-suited for heavily sampled manifolds. It has no internal model.

128:

gives conditions for the existence of unique attracting invariant objects in a broad class of dynamical systems. Active research in NLDR seeks to unfold the observation manifolds associated with dynamical systems to develop modeling techniques.

1236:, Isomap and Locally Linear Embedding share a common intuition relying on the notion that if a manifold is properly unfolded, then variance over the points is maximized. Its initial step, like Isomap and Locally Linear Embedding, is finding the

1194:

Modified LLE (MLLE) is another LLE variant which uses multiple weights in each neighborhood to address the local weight matrix conditioning problem which leads to distortions in LLE maps. Loosely speaking the multiple weights are the local

669:

that is embedded inside of a higher-dimensional vector space. The main intuition behind MVU is to exploit the local linearity of manifolds and create a mapping that preserves local neighbourhoods at every point of the underlying manifold.

354:

661:

between all of the points. Isomap then uses classic MDS to compute the reduced-dimensional positions of all the points. Landmark-Isomap is a variant of this algorithm that uses landmarks to increase speed, at the cost of some accuracy.

1519:); an analogy is drawn between the diffusion operator on a manifold and a Markov transition matrix operating on functions defined on the graph whose nodes were sampled from the manifold. In particular, let a data set be represented by

1872:

2959:

3216:

1611:

3178:

and curvilinear component analysis except that (1) it simultaneously penalizes false neighborhoods and tears by focusing on small distances in both original and output space, and that (2) it accounts for

543:

manifold. However, one can view certain other methods that perform well in such settings (e.g., Laplacian

Eigenmaps, LLE) as special cases of kernel PCA by constructing a data-dependent kernel matrix.

1285:

633:

on the manifold serve as the embedding dimensions, since under mild conditions this operator has a countable spectrum that is a basis for square integrable functions on the manifold (compare to

1824:

2304:

2209:

957:

1445:(CCA) looks for the configuration of points in the output space that preserves original distances as much as possible while focusing on small distances in the output space (conversely to

27:) with a rectangular hole in the middle. Top-right: the original 2D manifold used to generate the 3D dataset. Bottom left and right: 2D recoveries of the manifold respectively using the

4717:

S. Lespinats, M. Verleysen, A. Giron, B. Fertil, DD-HDS: a tool for visualization and exploration of high-dimensional data, IEEE Transactions on Neural

Networks 18 (5) (2007) 1265–1279.

81:

Plot of the two-dimensional points that results from using a NLDR algorithm. In this case, Manifold

Sculpting is used to reduce the data into just two dimensions (rotation and scale).

86:

amounts. Each image has 32×32 pixels. Each image can be represented as a vector of 1024 pixel values. Each row is a sample on a two-dimensional manifold in 1024-dimensional space (a

369:

1442:

1021:

730:

516:

2841:

2690:

99:

2068:

2522:

1755:

1693:

1633:

262:

3081:

3031:

2451:

681:(LLE) was presented at approximately the same time as Isomap. It has several advantages over Isomap, including faster optimization when implemented to take advantage of

4732:, In Platt, J.C. and Koller, D. and Singer, Y. and Roweis, S., editor, Advances in Neural Information Processing Systems 20, pp. 513–520, MIT Press, Cambridge, MA, 2008

240:

2340:

2245:

678:

2360:

2150:

1217:

is based on the intuition that when a manifold is correctly unfolded, all of the tangent hyperplanes to the manifold will become aligned. It begins by computing the

269:

2342:. The former means that very little diffusion has taken place while the latter implies that the diffusion process is nearly complete. Different strategies to choose

3135:

3108:

2989:

2717:

2630:

2603:

2576:

2549:

2122:

2095:

1402:

536:

2797:

local transitions (defined by differential equations) of the dynamical system. The metaphor of diffusion arises from the definition of a family diffusion distance

1839:

as defining some sort of affinity on that graph. The graph is symmetric by construction since the kernel is symmetric. It is easy to see here that from the tuple (

1657:

1428:

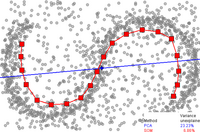

2794:

2764:

2737:

2473:

2406:

2383:

2132:. Since the exact structure of the manifold is not available, for the nearest neighbors the geodesic distance is approximated by euclidean distance. The choice

363:

eigenvectors of that matrix. By comparison, KPCA begins by computing the covariance matrix of the data after being transformed into a higher-dimensional space,

1200:

the local tangent spaces of every data point. The theoretical and empirical implications from the correct application of this algorithm are far-reaching.

102:

PCA (a linear dimensionality reduction algorithm) is used to reduce this same dataset into two dimensions, the resulting values are not so well organized.

1496:

transferred to another. Most manifold alignment techniques consider only two data sets, but the concept extends to arbitrarily many initial data sets.

1324:

Relational perspective map was inspired by a physical model in which positively charged particles move freely on the surface of a ball. Guided by the

201:

3880:

591:

2020:{\displaystyle K_{ij}={\begin{cases}e^{-\|x_{i}-x_{j}\|_{2}^{2}/\sigma ^{2}}&{\text{if }}x_{i}\sim x_{j}\\0&{\text{otherwise}}\end{cases}}}

1321:

in size the multi-particle system cools down gradually and converges to a configuration that reflects the distance information of the data points.

3750:

621:

regularization exist for adding this capability. Such techniques can be applied to other nonlinear dimensionality reduction algorithms as well.

3137:

takes into account all the relation between points x and y while calculating the distance and serves as a better notion of proximity than just

3463:

Haller, George; Ponsioen, Sten (2016). "Nonlinear normal modes and spectral submanifolds: Existence, uniqueness and use in model reduction".

3166:

representation of the observed vectors, and the MLP maps from that low-dimensional representation to the high-dimensional observation space.

1182:

2848:

1262:

1331:

between particles, the minimal energy configuration of the particles will reflect the strength of repulsive forces between the particles.

1300:

1468:

in its embedding. It is based on

Curvilinear Component Analysis (which extended Sammon's mapping), but uses geodesic distances instead.

1522:

4797:

McInnes, Leland; Healy, John; Melville, James (2018-12-07). "Uniform manifold approximation and projection for dimension reduction".

3721:

3231:

Uniform manifold approximation and projection (UMAP) is a nonlinear dimensionality reduction technique. Visually, it is similar to

2774:

is that only local features of the data are considered in diffusion maps as opposed to taking correlations of the entire data set.

1273:(see above) to learn a non-linear mapping from the high-dimensional to the embedded space. The mappings in NeuroScale are based on

3641:

3294:

is an open source C++ library containing implementations of LLE, Manifold

Sculpting, and some other manifold learning algorithms.

4165:

Zhang, Zhenyue; Hongyuan Zha (2005). "Principal

Manifolds and Nonlinear Dimension Reduction via Local Tangent Space Alignment".

3616:

Ham, Jihun; Lee, Daniel D.; Mika, Sebastian; Schölkopf, Bernhard. "A kernel view of the dimensionality reduction of manifolds".

1225:-first principal components in each local neighborhood. It then optimizes to find an embedding that aligns the tangent spaces.

3639:

Gorban, A. N.; Zinovyev, A. (2010). "Principal manifolds and graphs in practice: from molecular biology to dynamical systems".

1613:. The underlying assumption of diffusion map is that the high-dimensional data lies on a low-dimensional manifold of dimension

4915:

4859:

3791:

3557:

3447:

200:

based on a non-linear mapping from the embedded space to the high-dimensional space. These techniques are related to work on

4910:

3401:

4905:

546:

KPCA has an internal model, so it can be used to map points onto its embedding that were not available at training time.

213:

4756:

3879:

Bengio, Yoshua; Paiement, Jean-Francois; Vincent, Pascal; Delalleau, Olivier; Le Roux, Nicolas; Ouimet, Marie (2004).

1769:

3895:

3831:

4890:

2250:

2155:

912:

71:

features relatively intact, can make algorithms more efficient and allow analysts to visualize trends and patterns.

618:

172:

1161:

nonzero eigen vectors provide an orthogonal set of coordinates. Generally the data points are reconstructed from

578:

incidence. Different forms and colors correspond to various geographical locations. Red bold line represents the

487:

to factor away much of the computation, such that the entire process can be performed without actually computing

4508:

4211:

1150:

193:

77:

4880:

3912:

469:{\displaystyle C={\frac {1}{m}}\sum _{i=1}^{m}{\Phi (\mathbf {x} _{i})\Phi (\mathbf {x} _{i})^{\mathsf {T}}}.}

3312:

1274:

1214:

1209:

630:

4444:

1124:{\displaystyle C(Y)=\sum _{i}\left|\mathbf {Y} _{i}-\sum _{j}{\mathbf {W} _{ij}\mathbf {Y} _{j}}\right|^{2}}

833:{\displaystyle E(W)=\sum _{i}\left|\mathbf {X} _{i}-\sum _{j}{\mathbf {W} _{ij}\mathbf {X} _{j}}\right|^{2}}

4935:

3777:

3301:

2771:

1258:

650:

626:

484:

168:

107:

59:

55:

4373:

Taylor, D.; Klimm, F.; Harrington, H. A.; Kramár, M.; Mischaikow, K.; Porter, M. A.; Mucha, P. J. (2015).

3254:

A method based on proximity matrices is one where the data is presented to the algorithm in the form of a

1362:

Contagion maps use multiple contagions on a network to map the nodes as a point cloud. In the case of the

4875:

3368:

3263:

1325:

490:

114:

space, and the intrinsic variables of that manifold will represent the robot's position and orientation.

4574:

2800:

2639:

46:, is any of various related techniques that aim to project high-dimensional data onto lower-dimensional

3275:

1233:

4819:"UMAP: Uniform Manifold Approximation and Projection for Dimension Reduction — umap 0.3 documentation"

4742:

4486:

4332:

3530:

4616:

4445:"Curvilinear Component Analysis: A Self-Organizing Neural Network for Nonlinear Mapping of Data Sets"

4306:

3969:

Roweis, S. T.; Saul, L. K. (2000). "Nonlinear

Dimensionality Reduction by Locally Linear Embedding".

3867:

3859:

3397:"A unifying probabilistic perspective for spectral dimensionality reduction: insights and new models"

3348:

3271:

2033:

4237:"Probabilistic Non-linear Principal Component Analysis with Gaussian Process Latent Variable Models"

4179:

2478:

1897:

1701:

3180:

3150:

2991:

defines a distance between any two points of the data set based on path connectivity: the value of

1674:

1317:

1266:

654:

51:

4085:

NIPS'06: Proceedings of the 19th International Conference on Neural Information Processing Systems

3517:. Proceedings of the International Joint Conference on Neural Networks IJCNN'11. pp. 1959–66.

1616:

1221:-nearest neighbors of every point. It computes the tangent space at every point by computing the

245:

3698:

611:

3812:

3044:

2994:

2414:

349:{\displaystyle C={\frac {1}{m}}\sum _{i=1}^{m}{\mathbf {x} _{i}\mathbf {x} _{i}^{\mathsf {T}}}.}

4174:

3742:

3308:

3279:

3192:

563:

558:

Application of principal curves: Nonlinear quality of life index. Points represent data of the

219:

4847:

3574:

2309:

2214:

3552:. Lecture Notes in Computer Science and Engineering. Vol. 58. Springer. pp. 68–95.

3353:

2345:

2135:

1363:

1196:

197:

4900:

3266:. The variations tend to be differences in how the proximity data is computed; for example,

999:

dimensional space. A neighborhood preserving map is created based on this idea. Each point X

4396:

4375:"Topological data analysis of contagion maps for examining spreading processes on networks"

4033:

3978:

3927:

3420:

3338:

3113:

3086:

2967:

2695:

2608:

2581:

2554:

2527:

2100:

2073:

1369:

521:

171:

is presented by a blue straight line. Data points are the small grey circles. For PCA, the

125:

4695:

4530:

3282:(which is not in fact a mapping) are examples of metric multidimensional scaling methods.

1642:

707:. The original point is reconstructed by a linear combination, given by the weight matrix

8:

3618:

Proceedings of the 21st International Conference on Machine Learning, Banff, Canada, 2004

3374:

3343:

3333:

3239:

3220:

3175:

1446:

1407:

1142:

to optimize the coordinates. This minimization problem can be solved by solving a sparse

185:

160:

149:

91:

4400:

4037:

3982:

3931:

3712:

3424:

1835:

Thus one can think of the individual data points as the nodes of a graph and the kernel

4798:

4779:

4597:

4417:

4386:

4374:

4355:

4287:

4236:

4147:

4134:

4111:

4099:

4002:

3951:

3676:

3650:

3598:

3490:

3472:

3410:

3138:

2779:

2749:

2722:

2458:

2391:

2368:

1851:. This technique is common to a variety of fields and is known as the graph Laplacian.

1492:

1166:

117:

4056:

4021:

3509:

1464:

CDA trains a self-organizing neural network to fit the manifold and seeks to preserve

1334:

The Relational perspective map was introduced in. The algorithm firstly used the flat

4855:

4757:"Nonlinear Dimensionality Reduction by Topologically Constrained Isometric Embedding"

4700:

4649:

4467:

4422:

4279:

4217:

4207:

4151:

4139:

4080:

4061:

3994:

3955:

3943:

3891:

3837:

3827:

3787:

3786:. Lecture Notes in Computer Science and Engineering (LNCSE). Vol. 58. Springer.

3781:

3668:

3553:

3443:

3255:

3243:

3236:

1465:

665:

In manifold learning, the input data is assumed to be sampled from a low dimensional

658:

121:

4728:

4685:

4668:

4601:

4291:

3494:

3153:

in local regions, and then uses convex optimization to fit all the pieces together.

4783:

4771:

4690:

4680:

4641:

4589:

4459:

4412:

4404:

4359:

4347:

4271:

4184:

4129:

4121:

4100:"Locally Linear Embedding and fMRI feature selection in psychiatric classification"

4051:

4041:

4006:

3986:

3935:

3881:"Out-of-Sample Extensions for LLE, Isomap, MDS, Eigenmaps, and Spectral Clustering"

3763:

3759:

3694:

3680:

3660:

3621:

3602:

3590:

3482:

3363:

1347:

1328:

1289:

966:

dimensional space and the goal of the algorithm is to reduce the dimensionality to

571:

4022:"Hessian eigenmaps: Locally linear embedding techniques for high-dimensional data"

3990:

3939:

3396:

4645:

4556:

4550:

4351:

3259:

3162:

567:

47:

582:, approximating the dataset. This principal curve was produced by the method of

4818:

4593:

4259:

4125:

3594:

3297:

1270:

1253:

634:

559:

4775:

4275:

4188:

3664:

3486:

4929:

4221:

3808:

3738:

3208:

distances cannot be deduced from ranks) and its preservation is thus easier.

2386:

1504:

1476:

1456:

The stress function of CCA is related to a sum of right Bregman divergences.

682:

598:

87:

4046:

3841:

3625:

3183:

phenomenon by adapting the weighting function to the distance distribution.

870:. The cost function is minimized under two constraints: (a) Each data point

4704:

4653:

4471:

4426:

4283:

4143:

4065:

3998:

3947:

3672:

1848:

1516:

1351:

716:, of its neighbors. The reconstruction error is given by the cost function

575:

562:

171 countries in 4-dimensional space formed by the values of 4 indicators:

4202:

DeMers, D.; Cottrell, G.W. (1993). "Non-linear dimensionality reduction".

3813:"Laplacian Eigenmaps and Spectral Techniques for Embedding and Clustering"

1484:

preserve pairwise differences under both the forward and inverse mapping.

995:

dimensional space will be used to reconstruct the same point in the lower

4895:

4538:. The 25th International Conference on Machine Learning. pp. 1120–7.

3358:

1512:

1249:

583:

164:

4885:

4408:

3577:(1998). "Nonlinear Component Analysis as a Kernel Eigenvalue Problem".

3545:

1169:. For such an implementation the algorithm has only one free parameter

540:

24:

4463:

2954:{\displaystyle D_{t}^{2}(x,y)=\|p_{t}(x,\cdot )-p_{t}(y,\cdot )\|^{2}}

23:

Top-left: a 3D dataset of 1000 points in a spiraling band (a.k.a. the

4729:

Iterative Non-linear Dimensionality Reduction with Manifold Sculpting

4260:"Multilayer Joint Gait-Pose Manifolds for Human Gait Motion Modeling"

3913:"A Global Geometric Framework for Nonlinear Dimensionality Reduction"

3586:

1508:

1366:

the speed of the spread can be adjusted with the threshold parameter

19:

3174:

Data-driven high-dimensional scaling (DD-HDS) is closely related to

212:

Perhaps the most widely used algorithm for dimensional reduction is

4803:

4333:"Visualizing high-dimensional data with relational perspective map"

4116:

3546:"3. Learning Nonlinear Principal Manifolds by Self-Organising Maps"

3477:

3316:

2129:

2125:

1261:

and stacked denoising autoencoders. Related to autoencoders is the

666:

554:

32:

4667:

Scholz, M.; Kaplan, F.; Guy, C. L.; Kopka, J.; Selbig, J. (2005).

4391:

3866:

Matlab code for Laplacian Eigenmaps can be found in algorithms at

3783:

Principal Manifolds for Data Visualisation and Dimension Reduction

3655:

3550:

Principal Manifolds for Data Visualization and Dimension Reduction

3415:

3291:

2578:

in one time step. Similarly the probability of transitioning from

1338:

as the image manifold, then it has been extended (in the software

4755:

Rosman, G.; Bronstein, M.M.; Bronstein, A.M.; Kimmel, R. (2010).

3110:

is much more robust to noise in the data than geodesic distance.

1176:

196:(GTM) use a point representation in the embedded space to form a

35:

algorithms as implemented by the Modular Data Processing toolkit.

4104:

IEEE Journal of Translational Engineering in Health and Medicine

4081:"MLLE: Modified Locally Linear Embedding Using Multiple Weights"

3720:(PhD). Stanford Linear Accelerator Center, Stanford University.

3548:. In Gorban, A.N.; Kégl, B.; Wunsch, D.C.; Zinovyev, A. (eds.).

3232:

3226:

3211:

1606:{\displaystyle \mathbf {X} =\in \Omega \subset \mathbf {R^{D}} }

4632:

Venna, J.; Kaski, S. (2006). "Local multidimensional scaling".

3531:"Principal Component Analysis and Self-Organizing Maps: applet"

3320:

3267:

1431:

1343:

646:

4754:

3878:

1295:

98:

3235:, but it assumes that the data is uniformly distributed on a

3195:

to find an embedding. Like other algorithms, it computes the

2128:

distance should be used to actually measure distances on the

1335:

1134:

In this cost function, unlike the previous one, the weights W

906:

and (b) The sum of every row of the weight matrix equals 1.

3864:(PhD). Department of Mathematics, The University of Chicago.

204:, which also are based around the same probabilistic model.

4372:

2013:

1189:

1138:

are kept fixed and the minimization is done on the points Y

2766:

constitutes some notion of local geometry of the data set

1667:

which represents some notion of affinity of the points in

1280:

155:

3910:

1471:

1339:

879:

is reconstructed only from its neighbors, thus enforcing

4920:

4494:

European Symposium on Artificial Neural Networks (Esann)

4487:"Curvilinear component analysis and Bregman divergences"

3776:

483:

eigenvectors of that matrix, just like PCA. It uses the

4745:, Neurocomputing, vol. 72 (13–15), pp. 2964–2978, 2009.

3246:

is locally constant or approximately locally constant.

3169:

2152:

modulates our notion of proximity in the sense that if

216:. PCA begins by computing the covariance matrix of the

4741:

Lespinats S., Fertil B., Villemain P. and Herault J.,

4528:

3911:

Tenenbaum, J B.; de Silva, V.; Langford, J.C. (2000).

3780:; Kégl, B.; Wunsch, D. C.; Zinovyev, A., eds. (2007).

3249:

207:

159:

Approximation of a principal curve by one-dimensional

152:

is one of the first and most popular NLDR techniques.

3116:

3089:

3047:

2997:

2970:

2851:

2803:

2782:

2752:

2725:

2698:

2642:

2611:

2584:

2557:

2530:

2481:

2461:

2417:

2394:

2371:

2348:

2312:

2253:

2217:

2158:

2138:

2103:

2076:

2036:

1875:

1772:

1704:

1677:

1645:

1619:

1525:

1410:

1372:

1024:

915:

733:

524:

493:

479:

It then projects the transformed data onto the first

372:

272:

248:

222:

120:

are of general interest for model order reduction in

4796:

4666:

4304:

3572:

3262:. These methods all fall under the broader class of

689:

LLE computes the barycentric coordinates of a point

3300:implements the method for the programming language

1449:which focus on small distances in original space).

1265:algorithm, which uses stress functions inspired by

16:

Projection of data onto lower-dimensional manifolds

3527:The illustration is prepared using free software:

3129:

3102:

3075:

3025:

2983:

2953:

2835:

2788:

2770:. The major difference between diffusion maps and

2758:

2731:

2711:

2684:

2624:

2597:

2570:

2543:

2516:

2467:

2445:

2400:

2377:

2365:In order to faithfully represent a Markov matrix,

2354:

2334:

2298:

2239:

2203:

2144:

2116:

2089:

2062:

2019:

1818:

1749:

1687:

1651:

1627:

1605:

1422:

1396:

1123:

1015:dimensional space by minimizing the cost function

951:

832:

530:

510:

468:

348:

256:

234:

4573:Coifman, Ronald R.; Lafon, Stephane (July 2006).

4516:Advances in Neural Information Processing Systems

4485:Sun, Jigang; Crowe, Malcolm; Fyfe, Colin (2010).

4442:

4204:Advances in neural information processing systems

4164:

3888:Advances in Neural Information Processing Systems

3820:Advances in Neural Information Processing Systems

3144:

1659:represent the distribution of the data points on

1437:

1342:to use other types of closed manifolds, like the

549:

4927:

4743:Rankvisu: Mapping from the neighbourhood network

1819:{\displaystyle k(x,y)\geq 0\qquad \forall x,y,k}

1459:

1203:

4507:Walder, Christian; Schölkopf, Bernhard (2009).

4506:

4307:"Visualizing High-Dimensional Data Using t-SNE"

3751:Journal of the American Statistical Association

3615:

3507:

1157:being the number of data points), whose bottom

175:in this example is 23.23%, for SOM it is 6.86%.

4726:Gashler, M. and Ventura, D. and Martinez, T.,

4201:

3737:

3638:

3462:

3083:involves a sum over of all paths of length t,

2299:{\displaystyle \|x_{i}-x_{j}\|_{2}\ll \sigma }

2204:{\displaystyle \|x_{i}-x_{j}\|_{2}\gg \sigma }

1866:) can be constructed using a Gaussian kernel.

1177:Hessian locally-linear embedding (Hessian LLE)

952:{\displaystyle \sum _{j}{\mathbf {W} _{ij}}=1}

852:refer to the amount of contribution the point

4568:

4566:

4529:Wang, Chang; Mahadevan, Sridhar (July 2008).

4305:van der Maaten, L.J.P.; Hinton, G.E. (2008).

3227:Uniform manifold approximation and projection

3217:Topologically constrained isometric embedding

3212:Topologically constrained isometric embedding

1311:

1228:

28:

4921:Nonlinear PCA by autoencoder neural networks

4572:

4532:Manifold Alignment using Procrustes Analysis

4518:. Vol. 22. MIT Press. pp. 1713–20.

4484:

4019:

3806:

3437:

3033:will be smaller the more paths that connect

2942:

2885:

2818:

2804:

2281:

2254:

2186:

2159:

1935:

1908:

962:The original data points are collected in a

4582:Applied and Computational Harmonic Analysis

3697:- Multidimensional Data Visualization Tool

3511:Temporal Nonlinear Dimensionality Reduction

1301:t-distributed stochastic neighbor embedding

1296:t-distributed stochastic neighbor embedding

673:

4631:

4563:

4091:

3968:

1007:dimensional space is mapped onto a point Y

4802:

4694:

4684:

4669:"Non-linear PCA: a missing data approach"

4618:Diffusion Maps: Applications and Analysis

4438:

4436:

4416:

4390:

4178:

4133:

4115:

4078:

4055:

4045:

3853:

3851:

3654:

3476:

3414:

2524:is the probability of transitioning from

1173:which can be chosen by cross validation.

359:It then projects the data onto the first

4764:International Journal of Computer Vision

4679:(20). Oxford University Press: 3887–95.

4509:"Diffeomorphic Dimensionality Reduction"

4234:

3438:Lee, John A.; Verleysen, Michel (2007).

3394:

3149:Local Multidimensional Scaling performs

2385:must be normalized by the corresponding

1507:leverages the relationship between heat

1190:Modified Locally-Linear Embedding (MLLE)

553:

154:

97:

76:

18:

4257:

3642:International Journal of Neural Systems

1430:the contagion map is equivalent to the

1286:Gaussian process latent variable models

1281:Gaussian process latent variable models

167:with red squares, 20 nodes). The first

65:

4928:

4891:Gaussian Process Latent Variable Model

4845:

4552:Diffusion Maps and Geometric Harmonics

4496:. d-side publications. pp. 81–86.

4478:

4433:

3857:

3848:

3710:

3528:

1472:Diffeomorphic dimensionality reduction

605:

456:

336:

179:

4548:

4097:

3186:

3041:and vice versa. Because the quantity

1487:

139:

4452:IEEE Transactions on Neural Networks

4443:Demartines, P.; Hérault, J. (1997).

4314:Journal of Machine Learning Research

4241:Journal of Machine Learning Research

4167:SIAM Journal on Scientific Computing

3727:from the original on August 2, 2019.

3402:Journal of Machine Learning Research

3170:Data-driven high-dimensional scaling

4614:

3543:

3250:Methods based on proximity matrices

1306:

861:has while reconstructing the point

625:neighboring points (using e.g. the

511:{\displaystyle \Phi (\mathbf {x} )}

208:Kernel principal component analysis

144:

13:

4839:

4330:

3508:Gashler, M.; Martinez, T. (2011).

3440:Nonlinear Dimensionality Reduction

2836:{\displaystyle \{D_{t}\}_{t\in N}}

2685:{\displaystyle P^{t}(x_{i},x_{j})}

1798:

1680:

1585:

1165:nearest neighbors, as measured by

525:

494:

432:

411:

134:nonlinear dimensionality reduction

40:Nonlinear dimensionality reduction

14:

4947:

4869:

3861:Problems of Learning on Manifolds

1847:) one can construct a reversible

1499:

1357:

4621:(Masters). University of Oxford.

4264:IEEE Transactions on Cybernetics

3156:

1621:

1597:

1593:

1527:

1316:Relational perspective map is a

1099:

1084:

1058:

929:

808:

793:

767:

619:Reproducing kernel Hilbert space

501:

440:

419:

325:

313:

250:

192:) and its probabilistic variant

173:Fraction of variance unexplained

4854:. MIT Press. pp. 682–699.

4811:

4790:

4748:

4735:

4720:

4711:

4660:

4625:

4608:

4542:

4522:

4500:

4366:

4324:

4298:

4251:

4228:

4206:. Vol. 5. pp. 580–7.

4195:

4158:

4072:

4020:Donoho, D.; Grimes, C. (2003).

4013:

3962:

3904:

3872:

3858:Belkin, Mikhail (August 2003).

3800:

3770:

3731:

3704:

3687:

3264:metric multidimensional scaling

2475:now represents a Markov chain.

2063:{\displaystyle x_{i}\sim x_{j}}

1797:

1243:

897:is not a neighbor of the point

4916:Short review of Diffusion Maps

4881:Generative Topographic Mapping

4852:Probabilistic Machine Learning

4696:11858/00-001M-0000-0014-2B1F-2

3764:10.1080/01621459.1989.10478797

3632:

3609:

3566:

3537:

3521:

3501:

3456:

3431:

3388:

3145:Local multidimensional scaling

3070:

3058:

3020:

3008:

2938:

2926:

2910:

2898:

2879:

2867:

2679:

2653:

2517:{\displaystyle P(x_{i},x_{j})}

2511:

2485:

1788:

1776:

1750:{\displaystyle k(x,y)=k(y,x),}

1741:

1729:

1720:

1708:

1579:

1534:

1443:Curvilinear component analysis

1438:Curvilinear component analysis

1391:

1379:

1275:radial basis function networks

1034:

1028:

743:

737:

550:Principal curves and manifolds

505:

497:

451:

435:

429:

414:

194:generative topographic mapping

1:

4686:10.1093/bioinformatics/bti634

3991:10.1126/science.290.5500.2323

3940:10.1126/science.290.5500.2319

3714:Principal Curves and Surfaces

3381:

1695:has the following properties

1688:{\displaystyle {\mathit {k}}}

1460:Curvilinear distance analysis

1259:restricted Boltzmann machines

1210:Local tangent space alignment

1204:Local tangent space alignment

136:techniques are listed below.

4646:10.1016/j.neunet.2006.05.014

4549:Lafon, Stephane (May 2004).

4352:10.1057/palgrave.ivs.9500051

4079:Zhang, Z.; Wang, J. (2006).

3741:; Stuetzle, W. (June 1989).

3711:Hastie, T. (November 1984).

2772:principal component analysis

1628:{\displaystyle \mathbf {d} }

1479:Dimensionality Reduction or

627:k-nearest neighbor algorithm

257:{\displaystyle \mathbf {X} }

108:principal component analysis

60:principal component analysis

56:singular value decomposition

7:

3890:. Vol. 16. MIT Press.

3369:Growing self-organizing map

3327:

3285:

3202:

3161:Nonlinear PCA (NLPCA) uses

3141:or even geodesic distance.

1639:represent the data set and

132:Some of the more prominent

126:spectral submanifolds (SSM)

10:

4952:

4901:Relational Perspective Map

4594:10.1016/j.acha.2006.04.006

4258:Ding, M.; Fan, G. (2015).

4126:10.1109/JTEHM.2019.2936348

3595:10.1162/089976698300017467

3573:Schölkopf, B.; Smola, A.;

3533:. University of Leicester.

3276:maximum variance unfolding

3076:{\displaystyle D_{t}(x,y)}

3026:{\displaystyle D_{t}(x,y)}

2446:{\displaystyle P=D^{-1}K.}

1312:Relational perspective map

1234:Maximum Variance Unfolding

1229:Maximum variance unfolding

1207:

609:

4846:Murphy, Kevin P. (2022).

4823:umap-learn.readthedocs.io

4776:10.1007/s11263-010-0322-1

4340:Information Visualization

4276:10.1109/TCYB.2014.2373393

4189:10.1137/s1064827502419154

3665:10.1142/S0129065710002383

3487:10.1007/s11071-016-2974-z

3395:Lawrence, Neil D (2012).

3349:Whitney embedding theorem

3272:locally linear embeddings

2097:is a nearest neighbor of

1832:is positivity preserving

641:

631:Laplace–Beltrami operator

235:{\displaystyle m\times n}

4896:Locally Linear Embedding

4026:Proc Natl Acad Sci U S A

3191:Manifold Sculpting uses

3181:concentration of measure

3151:multidimensional scaling

2335:{\displaystyle K_{ij}=1}

2240:{\displaystyle K_{ij}=0}

1318:multidimensional scaling

1267:multidimensional scaling

679:Locally-linear Embedding

674:Locally-linear embedding

655:Multidimensional Scaling

651:Floyd–Warshall algorithm

649:is a combination of the

564:gross product per capita

92:intrinsic dimensionality

52:dimensionality reduction

4047:10.1073/pnas.1031596100

3626:10.1145/1015330.1015417

2636:time steps is given by

2355:{\displaystyle \sigma }

2145:{\displaystyle \sigma }

2030:In the above equation,

1854:For example, the graph

698:based on its neighbors

612:Manifold regularization

3826:. MIT Press: 586–691.

3193:graduated optimization

3131:

3104:

3077:

3027:

2985:

2955:

2837:

2790:

2760:

2733:

2713:

2686:

2626:

2599:

2572:

2545:

2518:

2469:

2447:

2402:

2379:

2356:

2336:

2300:

2241:

2205:

2146:

2118:

2091:

2064:

2021:

1820:

1751:

1689:

1653:

1629:

1607:

1424:

1398:

1354:, as image manifolds.

1125:

987:that reconstructs the

953:

834:

587:

532:

512:

470:

409:

350:

309:

258:

236:

176:

103:

82:

36:

4886:Mike Tipping's Thesis

4379:Nature Communications

4331:Li, James X. (2004).

4235:Lawrence, N. (2005).

4098:Sidhu, Gagan (2019).

3529:Mirkes, E.M. (2011).

3354:Discriminant analysis

3132:

3130:{\displaystyle D_{t}}

3105:

3103:{\displaystyle D_{t}}

3078:

3028:

2986:

2984:{\displaystyle D_{t}}

2956:

2838:

2791:

2761:

2739:multiplied by itself

2734:

2714:

2712:{\displaystyle P^{t}}

2687:

2627:

2625:{\displaystyle x_{j}}

2600:

2598:{\displaystyle x_{i}}

2573:

2571:{\displaystyle x_{j}}

2546:

2544:{\displaystyle x_{i}}

2519:

2470:

2448:

2403:

2380:

2357:

2337:

2301:

2242:

2206:

2147:

2119:

2117:{\displaystyle x_{j}}

2092:

2090:{\displaystyle x_{i}}

2065:

2022:

1821:

1752:

1690:

1654:

1630:

1608:

1425:

1399:

1397:{\displaystyle t\in }

1364:Global cascades model

1197:orthogonal projection

1126:

991:th data point in the

954:

835:

557:

533:

531:{\displaystyle \Phi }

513:

471:

389:

351:

289:

259:

237:

198:latent variable model

158:

101:

80:

22:

3339:Spectral submanifold

3307:The method has also

3114:

3087:

3045:

2995:

2968:

2849:

2801:

2780:

2750:

2723:

2696:

2640:

2609:

2582:

2555:

2528:

2479:

2459:

2415:

2392:

2369:

2346:

2310:

2251:

2215:

2156:

2136:

2101:

2074:

2034:

1873:

1770:

1702:

1675:

1663:. Further, define a

1652:{\displaystyle \mu }

1643:

1617:

1523:

1408:

1370:

1022:

913:

888:to be zero if point

731:

522:

491:

370:

270:

246:

220:

66:Applications of NLDR

4936:Dimension reduction

4848:"Manifold Learning"

4401:2015NatCo...6.7723T

4038:2003PNAS..100.5591D

3983:2000Sci...290.2323R

3932:2000Sci...290.2319T

3544:Yin, Hujun (2007).

3425:2010arXiv1010.4830L

3375:Self-organizing map

3334:Manifold hypothesis

3240:Riemannian manifold

3221:stress majorization

2866:

1948:

1423:{\displaystyle t=0}

1151:eigen value problem

978:. The same weights

606:Laplacian eigenmaps

341:

186:self-organizing map

180:Self-organizing map

169:principal component

118:Invariant manifolds

4409:10.1038/ncomms8723

3743:"Principal Curves"

3579:Neural Computation

3465:Nonlinear Dynamics

3187:Manifold sculpting

3139:Euclidean distance

3127:

3100:

3073:

3023:

2981:

2951:

2852:

2833:

2786:

2756:

2746:The Markov matrix

2729:

2709:

2682:

2622:

2595:

2568:

2541:

2514:

2465:

2443:

2398:

2375:

2352:

2332:

2296:

2237:

2201:

2142:

2114:

2087:

2060:

2017:

2012:

1934:

1816:

1747:

1685:

1649:

1625:

1603:

1493:Manifold alignment

1488:Manifold alignment

1466:geodesic distances

1420:

1394:

1252:is a feed-forward

1167:Euclidean distance

1121:

1080:

1049:

949:

925:

830:

789:

758:

659:geodesic distances

588:

528:

508:

466:

346:

323:

254:

232:

188:(SOM, also called

177:

140:Important concepts

106:By comparison, if

104:

83:

37:

4911:RankVisu homepage

4861:978-0-262-04682-4

4464:10.1109/72.554199

3926:(5500): 2319–23.

3807:Belkin, Mikhail;

3793:978-3-540-73749-0

3559:978-3-540-73749-0

3449:978-0-387-39350-6

3256:similarity matrix

3244:Riemannian metric

3237:locally connected

2789:{\displaystyle K}

2759:{\displaystyle P}

2732:{\displaystyle P}

2468:{\displaystyle P}

2401:{\displaystyle D}

2378:{\displaystyle K}

2362:can be found in.

2008:

1971:

1453:have to be made.

1071:

1040:

916:

780:

749:

387:

287:

122:dynamical systems

44:manifold learning

4943:

4865:

4833:

4832:

4830:

4829:

4815:

4809:

4808:

4806:

4794:

4788:

4787:

4761:

4752:

4746:

4739:

4733:

4724:

4718:

4715:

4709:

4708:

4698:

4688:

4664:

4658:

4657:

4640:(6–7): 889–899.

4629:

4623:

4622:

4615:Bah, B. (2008).

4612:

4606:

4605:

4579:

4575:"Diffusion Maps"

4570:

4561:

4560:

4546:

4540:

4539:

4537:

4526:

4520:

4519:

4513:

4504:

4498:

4497:

4491:

4482:

4476:

4475:

4449:

4440:

4431:

4430:

4420:

4394:

4370:

4364:

4363:

4337:

4328:

4322:

4321:

4311:

4302:

4296:

4295:

4255:

4249:

4248:

4232:

4226:

4225:

4199:

4193:

4192:

4182:

4162:

4156:

4155:

4137:

4119:

4095:

4089:

4088:

4076:

4070:

4069:

4059:

4049:

4017:

4011:

4010:

3977:(5500): 2323–6.

3966:

3960:

3959:

3917:

3908:

3902:

3901:

3885:

3876:

3870:

3865:

3855:

3846:

3845:

3817:

3804:

3798:

3797:

3774:

3768:

3767:

3747:

3735:

3729:

3728:

3726:

3719:

3708:

3702:

3691:

3685:

3684:

3658:

3636:

3630:

3629:

3613:

3607:

3606:

3570:

3564:

3563:

3541:

3535:

3534:

3525:

3519:

3518:

3516:

3505:

3499:

3498:

3480:

3471:(3): 1493–1534.

3460:

3454:

3453:

3435:

3429:

3428:

3418:

3409:(May): 1609–38.

3392:

3364:Feature learning

3309:been implemented

3176:Sammon's mapping

3136:

3134:

3133:

3128:

3126:

3125:

3109:

3107:

3106:

3101:

3099:

3098:

3082:

3080:

3079:

3074:

3057:

3056:

3032:

3030:

3029:

3024:

3007:

3006:

2990:

2988:

2987:

2982:

2980:

2979:

2960:

2958:

2957:

2952:

2950:

2949:

2925:

2924:

2897:

2896:

2865:

2860:

2842:

2840:

2839:

2834:

2832:

2831:

2816:

2815:

2795:

2793:

2792:

2787:

2765:

2763:

2762:

2757:

2738:

2736:

2735:

2730:

2718:

2716:

2715:

2710:

2708:

2707:

2691:

2689:

2688:

2683:

2678:

2677:

2665:

2664:

2652:

2651:

2631:

2629:

2628:

2623:

2621:

2620:

2604:

2602:

2601:

2596:

2594:

2593:

2577:

2575:

2574:

2569:

2567:

2566:

2550:

2548:

2547:

2542:

2540:

2539:

2523:

2521:

2520:

2515:

2510:

2509:

2497:

2496:

2474:

2472:

2471:

2466:

2452:

2450:

2449:

2444:

2436:

2435:

2407:

2405:

2404:

2399:

2384:

2382:

2381:

2376:

2361:

2359:

2358:

2353:

2341:

2339:

2338:

2333:

2325:

2324:

2305:

2303:

2302:

2297:

2289:

2288:

2279:

2278:

2266:

2265:

2246:

2244:

2243:

2238:

2230:

2229:

2210:

2208:

2207:

2202:

2194:

2193:

2184:

2183:

2171:

2170:

2151:

2149:

2148:

2143:

2123:

2121:

2120:

2115:

2113:

2112:

2096:

2094:

2093:

2088:

2086:

2085:

2069:

2067:

2066:

2061:

2059:

2058:

2046:

2045:

2026:

2024:

2023:

2018:

2016:

2015:

2009:

2006:

1995:

1994:

1982:

1981:

1972:

1969:

1965:

1964:

1963:

1962:

1953:

1947:

1942:

1933:

1932:

1920:

1919:

1888:

1887:

1825:

1823:

1822:

1817:

1756:

1754:

1753:

1748:

1694:

1692:

1691:

1686:

1684:

1683:

1658:

1656:

1655:

1650:

1634:

1632:

1631:

1626:

1624:

1612:

1610:

1609:

1604:

1602:

1601:

1600:

1578:

1577:

1559:

1558:

1546:

1545:

1530:

1447:Sammon's mapping

1429:

1427:

1426:

1421:

1403:

1401:

1400:

1395:

1348:projective space

1307:Other algorithms

1290:Gaussian process

1130:

1128:

1127:

1122:

1120:

1119:

1114:

1110:

1109:

1108:

1107:

1102:

1096:

1095:

1087:

1079:

1067:

1066:

1061:

1048:

958:

956:

955:

950:

942:

941:

940:

932:

924:

839:

837:

836:

831:

829:

828:

823:

819:

818:

817:

816:

811:

805:

804:

796:

788:

776:

775:

770:

757:

592:Principal curves

572:infant mortality

537:

535:

534:

529:

517:

515:

514:

509:

504:

475:

473:

472:

467:

462:

461:

460:

459:

449:

448:

443:

428:

427:

422:

408:

403:

388:

380:

355:

353:

352:

347:

342:

340:

339:

333:

328:

322:

321:

316:

308:

303:

288:

280:

263:

261:

260:

255:

253:

241:

239:

238:

233:

202:density networks

150:Sammon's mapping

145:Sammon's mapping

48:latent manifolds

42:, also known as

4951:

4950:

4946:

4945:

4944:

4942:

4941:

4940:

4926:

4925:

4906:DD-HDS homepage

4872:

4862:

4842:

4840:Further reading

4837:

4836:

4827:

4825:

4817:

4816:

4812:

4795:

4791:

4759:

4753:

4749:

4740:

4736:

4725:

4721:

4716:

4712:

4665:

4661:

4634:Neural Networks

4630:

4626:

4613:

4609:

4577:

4571:

4564:

4557:Yale University

4547:

4543:

4535:

4527:

4523:

4511:

4505:

4501:

4489:

4483:

4479:

4447:

4441:

4434:

4371:

4367:

4335:

4329:

4325:

4309:

4303:

4299:

4270:(11): 2413–24.

4256:

4252:

4233:

4229:

4214:

4200:

4196:

4180:10.1.1.211.9957

4163:

4159:

4096:

4092:

4077:

4073:

4018:

4014:

3967:

3963:

3915:

3909:

3905:

3898:

3883:

3877:

3873:

3856:

3849:

3834:

3815:

3805:

3801:

3794:

3775:

3771:

3745:

3736:

3732:

3724:

3717:

3709:

3705:

3692:

3688:

3637:

3633:

3614:

3610:

3571:

3567:

3560:

3542:

3538:

3526:

3522:

3514:

3506:

3502:

3461:

3457:

3450:

3436:

3432:

3393:

3389:

3384:

3344:Taken's theorem

3330:

3288:

3260:distance matrix

3252:

3229:

3214:

3205:

3189:

3172:

3163:backpropagation

3159:

3147:

3121:

3117:

3115:

3112:

3111:

3094:

3090:

3088:

3085:

3084:

3052:

3048:

3046:

3043:

3042:

3002:

2998:

2996:

2993:

2992:

2975:

2971:

2969:

2966:

2965:

2945:

2941:

2920:

2916:

2892:

2888:

2861:

2856:

2850:

2847:

2846:

2821:

2817:

2811:

2807:

2802:

2799:

2798:

2781:

2778:

2777:

2751:

2748:

2747:

2724:

2721:

2720:

2703:

2699:

2697:

2694:

2693:

2673:

2669:

2660:

2656:

2647:

2643:

2641:

2638:

2637:

2616:

2612:

2610:

2607:

2606:

2589:

2585:

2583:

2580:

2579:

2562:

2558:

2556:

2553:

2552:

2535:

2531:

2529:

2526:

2525:

2505:

2501:

2492:

2488:

2480:

2477:

2476:

2460:

2457:

2456:

2428:

2424:

2416:

2413:

2412:

2393:

2390:

2389:

2370:

2367:

2366:

2347:

2344:

2343:

2317:

2313:

2311:

2308:

2307:

2284:

2280:

2274:

2270:

2261:

2257:

2252:

2249:

2248:

2222:

2218:

2216:

2213:

2212:

2189:

2185:

2179:

2175:

2166:

2162:

2157:

2154:

2153:

2137:

2134:

2133:

2108:

2104:

2102:

2099:

2098:

2081:

2077:

2075:

2072:

2071:

2054:

2050:

2041:

2037:

2035:

2032:

2031:

2011:

2010:

2005:

2003:

1997:

1996:

1990:

1986:

1977:

1973:

1968:

1966:

1958:

1954:

1949:

1943:

1938:

1928:

1924:

1915:

1911:

1904:

1900:

1893:

1892:

1880:

1876:

1874:

1871:

1870:

1771:

1768:

1767:

1703:

1700:

1699:

1679:

1678:

1676:

1673:

1672:

1644:

1641:

1640:

1620:

1618:

1615:

1614:

1596:

1592:

1591:

1573:

1569:

1554:

1550:

1541:

1537:

1526:

1524:

1521:

1520:

1502:

1490:

1474:

1462:

1440:

1409:

1406:

1405:

1371:

1368:

1367:

1360:

1314:

1309:

1298:

1283:

1271:Sammon mappings

1246:

1231:

1212:

1206:

1192:

1179:

1141:

1137:

1115:

1103:

1098:

1097:

1088:

1083:

1082:

1081:

1075:

1062:

1057:

1056:

1055:

1051:

1050:

1044:

1023:

1020:

1019:

1010:

1002:

986:

933:

928:

927:

926:

920:

914:

911:

910:

905:

896:

887:

878:

869:

860:

851:

824:

812:

807:

806:

797:

792:

791:

790:

784:

771:

766:

765:

764:

760:

759:

753:

732:

729:

728:

715:

706:

697:

676:

644:

614:

608:

580:principal curve

568:life expectancy

552:

523:

520:

519:

500:

492:

489:

488:

455:

454:

450:

444:

439:

438:

423:

418:

417:

410:

404:

393:

379:

371:

368:

367:

335:

334:

329:

324:

317:

312:

311:

310:

304:

293:

279:

271:

268:

267:

249:

247:

244:

243:

221:

218:

217:

210:

182:

147:

142:

74:

68:

17:

12:

11:

5:

4949:

4939:

4938:

4924:

4923:

4918:

4913:

4908:

4903:

4898:

4893:

4888:

4883:

4878:

4871:

4870:External links

4868:

4867:

4866:

4860:

4841:

4838:

4835:

4834:

4810:

4789:

4747:

4734:

4719:

4710:

4673:Bioinformatics

4659:

4624:

4607:

4562:

4541:

4521:

4499:

4477:

4458:(1): 148–154.

4432:

4365:

4323:

4297:

4250:

4227:

4212:

4194:

4173:(1): 313–338.

4157:

4090:

4071:

4032:(10): 5591–6.

4012:

3961:

3903:

3896:

3871:

3868:Ohio-state.edu

3847:

3832:

3809:Niyogi, Partha

3799:

3792:

3769:

3758:(406): 502–6.

3730:

3703:

3699:Institut Curie

3686:

3649:(3): 219–232.

3631:

3608:

3565:

3558:

3536:

3520:

3500:

3455:

3448:

3430:

3386:

3385:

3383:

3380:

3379:

3378:

3372:

3366:

3361:

3356:

3351:

3346:

3341:

3336:

3329:

3326:

3325:

3324:

3305:

3295:

3287:

3284:

3280:Sammon mapping

3251:

3248:

3228:

3225:

3223:that follows.

3213:

3210:

3204:

3201:

3188:

3185:

3171:

3168:

3158:

3155:

3146:

3143:

3124:

3120:

3097:

3093:

3072:

3069:

3066:

3063:

3060:

3055:

3051:

3022:

3019:

3016:

3013:

3010:

3005:

3001:

2978:

2974:

2962:

2961:

2948:

2944:

2940:

2937:

2934:

2931:

2928:

2923:

2919:

2915:

2912:

2909:

2906:

2903:

2900:

2895:

2891:

2887:

2884:

2881:

2878:

2875:

2872:

2869:

2864:

2859:

2855:

2830:

2827:

2824:

2820:

2814:

2810:

2806:

2785:

2755:

2728:

2719:is the matrix

2706:

2702:

2681:

2676:

2672:

2668:

2663:

2659:

2655:

2650:

2646:

2619:

2615:

2592:

2588:

2565:

2561:

2538:

2534:

2513:

2508:

2504:

2500:

2495:

2491:

2487:

2484:

2464:

2454:

2453:

2442:

2439:

2434:

2431:

2427:

2423:

2420:

2397:

2374:

2351:

2331:

2328:

2323:

2320:

2316:

2295:

2292:

2287:

2283:

2277:

2273:

2269:

2264:

2260:

2256:

2236:

2233:

2228:

2225:

2221:

2200:

2197:

2192:

2188:

2182:

2178:

2174:

2169:

2165:

2161:

2141:

2111:

2107:

2084:

2080:

2057:

2053:

2049:

2044:

2040:

2028:

2027:

2014:

2004:

2002:

1999:

1998:

1993:

1989:

1985:

1980:

1976:

1967:

1961:

1957:

1952:

1946:

1941:

1937:

1931:

1927:

1923:

1918:

1914:

1910:

1907:

1903:

1899:

1898:

1896:

1891:

1886:

1883:

1879:

1827:

1826:

1815:

1812:

1809:

1806:

1803:

1800:

1796:

1793:

1790:

1787:

1784:

1781:

1778:

1775:

1758:

1757:

1746:

1743:

1740:

1737:

1734:

1731:

1728:

1725:

1722:

1719:

1716:

1713:

1710:

1707:

1682:

1648:

1623:

1599:

1595:

1590:

1587:

1584:

1581:

1576:

1572:

1568:

1565:

1562:

1557:

1553:

1549:

1544:

1540:

1536:

1533:

1529:

1505:Diffusion maps

1501:

1500:Diffusion maps

1498:

1489:

1486:

1473:

1470:

1461:

1458:

1439:

1436:

1419:

1416:

1413:

1393:

1390:

1387:

1384:

1381:

1378:

1375:

1359:

1358:Contagion maps

1356:

1313:

1310:

1308:

1305:

1297:

1294:

1282:

1279:

1254:neural network

1245:

1242:

1230:

1227:

1208:Main article:

1205:

1202:

1191:

1188:

1178:

1175:

1139:

1135:

1132:

1131:

1118:

1113:

1106:

1101:

1094:

1091:

1086:

1078:

1074:

1070:

1065:

1060:

1054:

1047:

1043:

1039:

1036:

1033:

1030:

1027:

1008:

1000:

982:

960:

959:

948:

945:

939:

936:

931:

923:

919:

901:

892:

883:

874:

865:

856:

847:

841:

840:

827:

822:

815:

810:

803:

800:

795:

787:

783:

779:

774:

769:

763:

756:

752:

748:

745:

742:

739:

736:

711:

702:

693:

675:

672:

643:

640:

635:Fourier series

607:

604:

551:

548:

527:

507:

503:

499:

496:

477:

476:

465:

458:

453:

447:

442:

437:

434:

431:

426:

421:

416:

413:

407:

402:

399:

396:

392:

386:

383:

378:

375:

357:

356:

345:

338:

332:

327:

320:

315:

307:

302:

299:

296:

292:

286:

283:

278:

275:

252:

231:

228:

225:

209:

206:

181:

178:

146:

143:

141:

138:

67:

64:

15:

9:

6:

4:

3:

2:

4948:

4937:

4934:

4933:

4931:

4922:

4919:

4917:

4914:

4912:

4909:

4907:

4904:

4902:

4899:

4897:

4894:

4892:

4889:

4887:

4884:

4882:

4879:

4877:

4874:

4873:

4863:

4857:

4853:

4849:

4844:

4843:

4824:

4820:

4814:

4805:

4800:

4793:

4785:

4781:

4777:

4773:

4769:

4765:

4758:

4751:

4744:

4738:

4731:

4730:

4723:

4714:

4706:

4702:

4697:

4692:

4687:

4682:

4678:

4674:

4670:

4663:

4655:

4651:

4647:

4643:

4639:

4635:

4628:

4620:

4619:

4611:

4603:

4599:

4595:

4591:

4587:

4583:

4576:

4569:

4567:

4558:

4554:

4553:

4545:

4534:

4533:

4525:

4517:

4510:

4503:

4495:

4488:

4481:

4473:

4469:

4465:

4461:

4457:

4453:

4446:

4439:

4437:

4428:

4424:

4419:

4414:

4410:

4406:

4402:

4398:

4393:

4388:

4384:

4380:

4376:

4369:

4361:

4357:

4353:

4349:

4345:

4341:

4334:

4327:

4319:

4315:

4308:

4301:

4293:

4289:

4285:

4281:

4277:

4273:

4269:

4265:

4261:

4254:

4246:

4242:

4238:

4231:

4223:

4219:

4215:

4209:

4205:

4198:

4190:

4186:

4181:

4176:

4172:

4168:

4161:

4153:

4149:

4145:

4141:

4136:

4131:

4127:

4123:

4118:

4113:

4109:

4105:

4101:

4094:

4086:

4082:

4075:

4067:

4063:

4058:

4053:

4048:

4043:

4039:

4035:

4031:

4027:

4023:

4016:

4008:

4004:

4000:

3996:

3992:

3988:

3984:

3980:

3976:

3972:

3965:

3957:

3953:

3949:

3945:

3941:

3937:

3933:

3929:

3925:

3921:

3914:

3907:

3899:

3897:0-262-20152-6

3893:

3889:

3882:

3875:

3869:

3863:

3862:

3854:

3852:

3843:

3839:

3835:

3833:0-262-27173-7

3829:

3825:

3821:

3814:

3810:

3803:

3795:

3789:

3785:

3784:

3779:

3778:Gorban, A. N.

3773:

3765:

3761:

3757:

3753:

3752:

3744:

3740:

3734:

3723:

3716:

3715:

3707:

3700:

3696:

3693:A. Zinovyev,

3690:

3682:

3678:

3674:

3670:

3666:

3662:

3657:

3652:

3648:

3644:

3643:

3635:

3627:

3623:

3619:

3612:

3604:

3600: