64:

184:

Loop interchange on this example can improve the cache performance of accessing b(j,i), but it will ruin the reuse of a(i) and c(i) in the inner loop, as it introduces two extra loads (for a(i) and for c(i)) and one extra store (for a(i)) during each iteration. As a result, the overall performance

80:

occur if the contiguously accessed array elements within the loop come from a different cache block, and loop interchange can help prevent this. The effectiveness of loop interchange depends on and must be considered in light of the cache model used by the underlying hardware and the array model

75:

when accessing array elements. When a processor accesses an array element for the first time, it will retrieve an entire block of data from memory to cache. That block is likely to have many more consecutive elements after the first one, so on the next array element access, it will be brought

193:

It is not always safe to exchange the iteration variables due to dependencies between statements for the order in which they must execute. To determine whether a compiler can safely interchange loops,

28:. The variable used in the inner loop switches to the outer loop, and vice versa. It is often done to ensure that the elements of a multi-dimensional

329:

476:

102:. Thus the order of two iteration variables in the first example is suitable for a C program while the second example is better for FORTRAN.

510:

114:

Loop interchange may lead to worse performance because cache performance is only part of the story. Take the following example:

290:

322:

682:

559:

460:

266:

708:

601:

315:

106:

can detect the improper ordering by programmers and interchange the order to achieve better cache performance.

496:

580:

631:

596:

520:

395:

278:

375:

85:

380:

216:

52:

528:

505:

481:

410:

657:

652:

606:

533:

357:

352:

33:

687:

611:

455:

241:

29:

8:

667:

538:

430:

338:

194:

103:

51:

On occasion, such a transformation may create opportunities to further optimize, such as

662:

626:

445:

435:

385:

543:

367:

296:

286:

677:

616:

486:

465:

425:

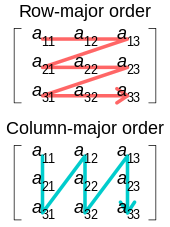

88:, array elements in the same row are stored consecutively in memory (a, a, a) ‒ in

25:

24:

is the process of exchanging the order of two iteration variables used by a nested

672:

90:

17:

77:

647:

621:

420:

415:

400:

274:

221:

206:

702:

98:

programs store array elements from the same column together (a, a, a), using

76:

directly from cache (which is faster than getting it from slow main memory).

63:

211:

271:

Optimizing

Compilers for Modern Architectures: A Dependence-Based Approach

390:

307:

32:

are accessed in the order in which they are present in memory, improving

470:

564:

72:

282:

300:

71:

The major purpose of loop interchange is to take advantage of the

95:

48:

for i from 0 to 10 for j from 0 to 20 a = i + j

42:

for j from 0 to 20 for i from 0 to 10 a = i + j

58:

700:

477:Induction variable recognition and elimination

323:

265:

249:Parallel Programming Guide for HP-UX Systems

67:Illustration of row- and column-major order

337:

330:

316:

185:may be degraded after loop interchange.

62:

511:Sparse conditional constant propagation

701:

273:(2011 digital print of 1st ed.).

311:

39:For example, in the code fragment:

13:

259:

45:loop interchange would result in:

14:

720:

461:Common subexpression elimination

602:Compile-time function execution

59:The utility of loop interchange

234:

1:

227:

581:Interprocedural optimization

7:

632:Profile-guided optimization

597:Bounds-checking elimination

200:

10:

725:

396:Loop-invariant code motion

279:Morgan Kaufmann Publishers

55:of the array assignments.

640:

589:

573:

552:

519:

495:

444:

376:Automatic parallelization

366:

345:

188:

109:

116:

381:Automatic vectorization

269:; Allen, Randy (2002).

217:Loop fission and fusion

53:automatic vectorization

709:Compiler optimizations

529:Instruction scheduling

506:Global value numbering

482:Live-variable analysis

411:Loop nest optimization

339:Compiler optimizations

86:C programming language

81:used by the compiler.

68:

658:Control-flow analysis

653:Array-access analysis

607:Dead-code elimination

565:Tail-call elimination

534:Instruction selection

358:Local value numbering

353:Peephole optimization

94:. On the other hand,

66:

34:locality of reference

688:Value range analysis

612:Expression templates

456:Available expression

104:Optimizing compilers

668:Dependence analysis

539:Register allocation

431:Software pipelining

195:dependence analysis

663:Data-flow analysis

627:Partial evaluation

436:Strength reduction

386:Induction variable

251:. HP. August 2003.

242:"Loop interchange"

69:

696:

695:

544:Rematerialization

292:978-1-55860-286-1

716:

678:Pointer analysis

617:Inline expansion

487:Use-define chain

466:Constant folding

426:Loop unswitching

406:Loop interchange

332:

325:

318:

309:

308:

304:

253:

252:

246:

238:

180:

177:

174:

171:

168:

165:

162:

159:

156:

153:

150:

147:

144:

141:

138:

135:

132:

129:

126:

123:

120:

22:loop interchange

724:

723:

719:

718:

717:

715:

714:

713:

699:

698:

697:

692:

673:Escape analysis

641:Static analysis

636:

585:

569:

548:

521:Code generation

515:

491:

447:

440:

362:

341:

336:

293:

262:

260:Further reading

257:

256:

244:

240:

239:

235:

230:

203:

191:

182:

181:

178:

175:

172:

169:

166:

163:

160:

157:

154:

151:

148:

145:

142:

139:

136:

133:

130:

127:

124:

121:

118:

112:

91:row-major order

61:

49:

43:

18:compiler theory

12:

11:

5:

722:

712:

711:

694:

693:

691:

690:

685:

683:Shape analysis

680:

675:

670:

665:

660:

655:

650:

648:Alias analysis

644:

642:

638:

637:

635:

634:

629:

624:

622:Jump threading

619:

614:

609:

604:

599:

593:

591:

587:

586:

584:

583:

577:

575:

571:

570:

568:

567:

562:

556:

554:

550:

549:

547:

546:

541:

536:

531:

525:

523:

517:

516:

514:

513:

508:

502:

500:

493:

492:

490:

489:

484:

479:

474:

468:

463:

458:

452:

450:

442:

441:

439:

438:

433:

428:

423:

421:Loop unrolling

418:

416:Loop splitting

413:

408:

403:

401:Loop inversion

398:

393:

388:

383:

378:

372:

370:

364:

363:

361:

360:

355:

349:

347:

343:

342:

335:

334:

327:

320:

312:

306:

305:

291:

275:Academic Press

261:

258:

255:

254:

232:

231:

229:

226:

225:

224:

222:Loop unrolling

219:

214:

209:

207:Loop splitting

202:

199:

190:

187:

117:

111:

108:

60:

57:

47:

41:

9:

6:

4:

3:

2:

721:

710:

707:

706:

704:

689:

686:

684:

681:

679:

676:

674:

671:

669:

666:

664:

661:

659:

656:

654:

651:

649:

646:

645:

643:

639:

633:

630:

628:

625:

623:

620:

618:

615:

613:

610:

608:

605:

603:

600:

598:

595:

594:

592:

588:

582:

579:

578:

576:

572:

566:

563:

561:

560:Deforestation

558:

557:

555:

551:

545:

542:

540:

537:

535:

532:

530:

527:

526:

524:

522:

518:

512:

509:

507:

504:

503:

501:

498:

494:

488:

485:

483:

480:

478:

475:

472:

469:

467:

464:

462:

459:

457:

454:

453:

451:

449:

443:

437:

434:

432:

429:

427:

424:

422:

419:

417:

414:

412:

409:

407:

404:

402:

399:

397:

394:

392:

389:

387:

384:

382:

379:

377:

374:

373:

371:

369:

365:

359:

356:

354:

351:

350:

348:

344:

340:

333:

328:

326:

321:

319:

314:

313:

310:

302:

298:

294:

288:

284:

280:

276:

272:

268:

264:

263:

250:

243:

237:

233:

223:

220:

218:

215:

213:

210:

208:

205:

204:

198:

197:is required.

196:

186:

115:

107:

105:

101:

97:

93:

92:

87:

82:

79:

74:

65:

56:

54:

46:

40:

37:

35:

31:

27:

23:

19:

405:

270:

267:Kennedy, Ken

248:

236:

212:Loop skewing

192:

183:

113:

100:column-major

99:

89:

83:

78:Cache misses

70:

50:

44:

38:

21:

15:

473:elimination

391:Loop fusion

346:Basic block

553:Functional

471:Dead store

301:2001092381

228:References

446:Data-flow

73:CPU cache

703:Category

448:analysis

283:Elsevier

201:See also

96:FORTRAN

574:Global

499:-based

299:

289:

189:Safety

179:end do

176:end do

110:Caveat

590:Other

245:(PDF)

134:10000

30:array

368:Loop

297:LCCN

287:ISBN

152:1000

26:loop

497:SSA

137:do

119:do

84:In

16:In

705::

295:.

285:.

281:/

277:/

247:.

36:.

20:,

331:e

324:t

317:v

303:.

173:c

170:*

167:b

164:+

161:a

158:=

155:a

149:,

146:1

143:=

140:j

131:,

128:1

125:=

122:i

Text is available under the Creative Commons Attribution-ShareAlike License. Additional terms may apply.